In electrical engineering, frequency compensation is a technique used in amplifiers, and especially in amplifiers employing negative feedback. It usually has two primary goals: to avoid the unintentional creation of positive feedback, which would cause the amplifier to oscillate, and to control overshoot and ringing in the amplifier's step response.

Explanation

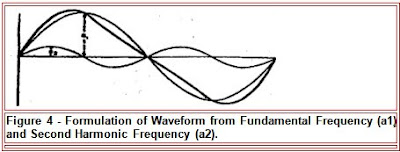

Most amplifiers use negative feedback to trade gain for other desirable properties, such as decreased distortion or improved noise reduction. Ideally, the phase characteristic of an amplifier's frequency response would be constant; however, device limitations make this goal physically unattainable. More particularly, capacitances within the amplifier's gain stages cause the output signal to lag behind the input signal by up to 90° for each pole they create.[1] If the sum of these phase lags reaches 360°, the output signal will be in phase with the input signal. Feeding back any portion of this output signal to the input when the gain of the amplifier is sufficient will cause the amplifier to oscillate, because the feedback signal then reinforces the input signal. That is, the feedback is positive rather than negative.

Frequency compensation is implemented to avoid this result.
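A quick way to see why the number of poles matters is to add up the lag of each pole numerically. In the Python sketch below, each real pole at frequency p contributes −arctan(f/p). That is −45° at f = p, and it only tends toward −90° as f grows. As a result, two poles approach −180° of amplifier lag but never reach it, while three poles reach it at a finite frequency. Those −180° of amplifier lag, together with the 180° inversion of a negative-feedback loop, make up the 360° mentioned above. The helper names `total_phase_deg` and `frequency_for_phase` and the pole frequencies are hypothetical, chosen only to illustrate the arithmetic.

```python
import math

def total_phase_deg(f, poles):
    """Phase shift in degrees at frequency f for real poles at the given frequencies.

    Each pole at p contributes -atan(f / p): -45 deg at f = p, tending to -90 deg.
    """
    return -sum(math.degrees(math.atan(f / p)) for p in poles)

def frequency_for_phase(target_deg, poles, f_lo=1e-3, f_hi=1e15):
    """Log-scale bisection for the frequency where the phase equals target_deg.

    Returns None if the phase has not reached target_deg even at f_hi.
    """
    if total_phase_deg(f_hi, poles) > target_deg:
        return None
    for _ in range(200):
        f_mid = math.sqrt(f_lo * f_hi)
        if total_phase_deg(f_mid, poles) > target_deg:
            f_lo = f_mid      # not enough lag yet: move up in frequency
        else:
            f_hi = f_mid
    return math.sqrt(f_lo * f_hi)

two_poles = [1e3, 1e5]          # hypothetical pole frequencies, Hz
three_poles = [1e3, 1e5, 1e6]

print(total_phase_deg(1e3, [1e3]))            # a single pole at its own frequency
print(frequency_for_phase(-180, two_poles))    # None: -180 deg is approached, never reached
print(frequency_for_phase(-180, three_poles))  # a finite frequency, in Hz
```

For three identical poles at p, each must contribute −60°, so the −180° point falls at f = p·tan 60° = √3·p. This gives a convenient check on the bisection.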

Another goal of frequency compensation is to control the step response of an amplifier circuit as shown in Figure 1. For example, if a step in voltage is input to a voltage amplifier, ideally a step in output voltage would occur. However, the output is not ideal because of the frequency response of the amplifier, and ringing occurs. Several figures of merit to describe the adequacy of step response are in common use. One is the rise time of the output, which ideally would be short. A second is the time for the output to lock into its final value, which again should be short. The success in reaching this lock-in at final value is described by overshoot (how far the response exceeds final value) and settling time (how long the output swings back and forth about its final value). These various measures of the step response usually conflict with one another, requiring optimization methods.

Frequency compensation is implemented to optimize step response, one method being pole splitting.
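The step-response figures of merit above can be read off a sampled response. The Python sketch below uses the textbook underdamped second-order response ω_n²/(s² + 2ζω_n s + ω_n²), with ζ = 0.4 and ω_n = 1, as a stand-in for an amplifier. The 10–90% rise-time definition and the ±2% settling band are common conventions assumed here, not taken from the text. The helper names are hypothetical.

```python
import math

def second_order_step(t, zeta, wn):
    """Unit-step response of wn^2 / (s^2 + 2*zeta*wn*s + wn^2), valid for 0 < zeta < 1."""
    wd = wn * math.sqrt(1 - zeta**2)       # damped natural frequency
    phi = math.acos(zeta)
    return 1 - math.exp(-zeta * wn * t) / math.sqrt(1 - zeta**2) * math.sin(wd * t + phi)

def step_metrics(ts, ys, final=1.0, band=0.02):
    """Rise time (10%-90%), fractional overshoot and settling time from samples."""
    t10 = next(t for t, y in zip(ts, ys) if y >= 0.1 * final)
    t90 = next(t for t, y in zip(ts, ys) if y >= 0.9 * final)
    overshoot = (max(ys) - final) / final
    # Settling time: the last sample at which the output is outside the band.
    settle = 0.0
    for t, y in zip(ts, ys):
        if abs(y - final) > band * final:
            settle = t
    return t90 - t10, overshoot, settle

dt = 1e-3
ts = [k * dt for k in range(40001)]               # 0 to 40 s
ys = [second_order_step(t, zeta=0.4, wn=1.0) for t in ts]
rise, overshoot, settle = step_metrics(ts, ys)
print(f"rise {rise:.2f} s, overshoot {overshoot:.1%}, settling {settle:.2f} s")
```

For this response, the measured overshoot should agree closely with the closed-form value exp(−πζ/√(1 − ζ²)), which is roughly 25% for ζ = 0.4.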

Use in operational amplifiers

Because operational amplifiers are so ubiquitous and are designed to be used with feedback, the following discussion will be limited to frequency compensation of these devices.

It should be expected that the outputs of even the simplest operational amplifiers will have at least two poles, and usually more. An unfortunate consequence of this is that at some critical frequency, the phase of the amplifier's output is −180° relative to the phase of its input signal. The amplifier will oscillate if it has a gain of one or more at this critical frequency. This is because (a) the feedback is applied through an inverting input, which adds a further −180° to the output phase and makes the total phase shift −360°, and (b) the gain is sufficient to induce oscillation.

A more precise statement of this is the following: An operational amplifier will oscillate at the frequency at which its open loop gain equals its closed loop gain if, at that frequency,

1. The open loop gain of the amplifier is ≥ 1 and

2. The difference between the phase of the open loop signal and the phase response of the network creating the closed loop output is −180°. Mathematically,

$$\Phi_{\mathrm{OL}} - \Phi_{\mathrm{CLnet}} = -180^\circ$$
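The two conditions can be checked numerically for a simple model. The Python sketch below assumes an amplifier whose open-loop gain has only real poles, and a purely resistive feedback network with feedback fraction β. Under those assumptions Φ_CLnet = 0 and the closed-loop gain is about 1/β, so condition 2 reduces to the amplifier's own phase reaching −180°. The sketch finds that frequency and then checks condition 1 in the equivalent loop-gain form |Aβ| ≥ 1, that is, open-loop gain at least equal to the closed-loop gain. The gain, pole frequencies and function names are hypothetical.

```python
import math

def loop_gain(f, a0, poles, beta):
    """Magnitude and phase (deg) of A(jf)*beta for an all-pole amplifier and resistive feedback."""
    mag = a0 * beta
    phase = 0.0
    for p in poles:
        mag /= math.sqrt(1 + (f / p) ** 2)
        phase -= math.degrees(math.atan(f / p))
    return mag, phase

def will_oscillate(a0, poles, beta):
    """Find where the loop phase reaches -180 deg (condition 2),
    then check whether the loop gain there is still >= 1 (condition 1)."""
    lo, hi = 1e-3, 1e15
    if loop_gain(hi, a0, poles, beta)[1] > -180:
        return False          # phase never reaches -180 deg
    for _ in range(200):      # log-scale bisection on the phase
        mid = math.sqrt(lo * hi)
        if loop_gain(mid, a0, poles, beta)[1] > -180:
            lo = mid
        else:
            hi = mid
    mag_at_180, _ = loop_gain(hi, a0, poles, beta)
    return mag_at_180 >= 1.0

A0 = 1e5                        # hypothetical DC open-loop gain
POLES = [1e6, 1e7, 5e7]         # hypothetical open-loop poles, Hz
for beta in (1.0, 1e-3, 1e-4):  # closed-loop gain is roughly 1/beta
    print(f"closed-loop gain {1 / beta:>6.0f}: oscillates = {will_oscillate(A0, POLES, beta)}")
```

With these values, the open-loop gain at the −180° frequency is roughly 1500. The loop is therefore expected to oscillate at closed-loop gains of 1 and 1000 but not at 10 000. This shows that an uncompensated amplifier may be stable only when used at high closed-loop gain.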

Practice

Frequency compensation is implemented by modifying the gain and phase characteristics of the amplifier's open loop output or of its feedback network, or both, in such a way as to avoid the conditions leading to oscillation. This is usually done by the internal or external use of resistance-capacitance networks.

Dominant-pole compensation

The method most commonly used is called dominant-pole compensation, which is a form of lag compensation. A pole placed at an appropriate low frequency in the open-loop response reduces the gain of the amplifier to one (0 dB) for a frequency at or just below the location of the next highest frequency pole. The lowest frequency pole is called the dominant pole because it dominates the effect of all of the higher frequency poles. The result is that the difference between the open loop output phase and the phase response of a feedback network having no reactive elements never falls below −180° while the amplifier has a gain of one or more, ensuring stability.

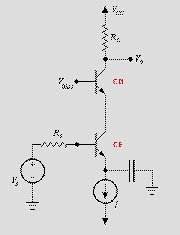

Dominant-pole compensation can be implemented for general purpose operational amplifiers by adding an integrating capacitance to the stage that provides the bulk of the amplifier's gain. This capacitor creates a pole that is set at a frequency low enough to reduce the gain to one (0 dB) at or just below the frequency where the pole next highest in frequency is located. The result is a phase margin of ≈ 45°, depending on the proximity of still higher poles.[2] This margin is sufficient to prevent oscillation in the most commonly used feedback configurations. In addition, dominant-pole compensation allows control of overshoot and ringing in the amplifier step response, which can be a more demanding requirement than the simple need for stability.
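The effect on phase margin can be sketched numerically. The Python example below assumes a hypothetical amplifier with DC gain 10^5 and poles at 1, 10 and 50 MHz, used with unity feedback (β = 1). It adds a new dominant pole at f_d = 1 MHz / 10^5 = 10 Hz, so that the gain falls to one near the next-higher pole. Adding a separate pole is a simplification: in an integrated op amp, the compensation capacitor usually moves an existing pole instead, as described under pole splitting in this section. The function names are hypothetical.

```python
import math

def loop_gain(f, a0, poles):
    """|T| and phase (deg) of T(jf) = a0 / prod(1 + jf/p), i.e. unity feedback (beta = 1)."""
    mag, phase = a0, 0.0
    for p in poles:
        mag /= math.sqrt(1 + (f / p) ** 2)
        phase -= math.degrees(math.atan(f / p))
    return mag, phase

def phase_margin(a0, poles):
    """Bisect for the unity-gain crossover (|T| falls monotonically for an
    all-pole response) and return (crossover frequency, 180 + phase there)."""
    lo, hi = 1e-6, 1e15
    for _ in range(200):
        mid = math.sqrt(lo * hi)
        if loop_gain(mid, a0, poles)[0] > 1.0:
            lo = mid
        else:
            hi = mid
    return hi, 180.0 + loop_gain(hi, a0, poles)[1]

A0 = 1e5                        # hypothetical DC open-loop gain
poles = [1e6, 1e7, 5e7]         # hypothetical uncompensated poles, Hz

fc, pm = phase_margin(A0, poles)
print(f"uncompensated: crossover {fc / 1e6:.0f} MHz, phase margin {pm:.0f} deg")

# Dominant pole chosen so that A0 * f_d equals the next-higher pole (1 MHz).
f_d = poles[0] / A0
fc, pm = phase_margin(A0, [f_d] + poles)
print(f"compensated:   crossover {fc / 1e6:.2f} MHz, phase margin {pm:.0f} deg")
```

The uncompensated loop should show a large negative phase margin. The compensated one should show a margin of roughly 45°, which matches the figure quoted above.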

Though simple and effective, this kind of conservative dominant pole compensation has two drawbacks:

1. It reduces the bandwidth of the amplifier, thereby reducing available open loop gain at higher frequencies. This, in turn, reduces the amount of feedback available for distortion correction, etc. at higher frequencies.

2. It reduces the amplifier's slew rate. This reduction results from the time it takes the finite current driving the compensated stage to charge the compensating capacitor. The result is the inability of the amplifier to reproduce high amplitude, rapidly changing signals accurately.
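Both drawbacks can be put into numbers. The Python sketch below uses hypothetical values: 20 µA available to charge a 30 pF compensation capacitor, and a compensated unity-gain bandwidth (GBW) of 1 MHz.

- The slew rate is I/C.
- A sine of peak amplitude V_p at frequency f has a maximum slope of 2πfV_p. Setting that slope equal to the slew rate gives the highest frequency reproducible at full amplitude.
- For the first drawback, the sketch assumes a single dominant pole, so that well above that pole the open-loop gain is approximately GBW/f. The loop gain left for correction is that value divided by the closed-loop gain.

The function names are hypothetical.

```python
import math

# Hypothetical values for a compensated op amp (illustration only).
I_STAGE = 20e-6      # A: current available to charge the compensation capacitor
C_COMP = 30e-12      # F: compensation capacitor
GBW = 1e6            # Hz: unity-gain bandwidth after compensation

def slew_rate(i_max, c_comp):
    """Maximum output rate of change in V/s: the capacitor charges no faster than I/C."""
    return i_max / c_comp

def full_power_bandwidth(sr, v_peak):
    """Highest sine frequency of amplitude v_peak that stays within the slew-rate limit.

    A sine v_peak*sin(2*pi*f*t) has maximum slope 2*pi*f*v_peak."""
    return sr / (2 * math.pi * v_peak)

def excess_loop_gain(f, gbw, closed_loop_gain):
    """Loop gain left for error correction at frequency f, assuming a single dominant
    pole so that |A(f)| ~= gbw / f well above that pole."""
    return (gbw / f) / closed_loop_gain

sr = slew_rate(I_STAGE, C_COMP)
print(f"slew rate: {sr / 1e6:.3f} V/us")
print(f"full-power bandwidth at 10 V peak: {full_power_bandwidth(sr, 10.0):.0f} Hz")
print(f"loop gain at 20 kHz, closed-loop gain 10: {excess_loop_gain(20e3, GBW, 10):.1f}")
```

With these values, the slew rate is about 0.67 V/µs and a 10 V peak sine is limited to roughly 10.6 kHz. At 20 kHz, a closed-loop gain of 10 leaves a loop gain of only 5.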

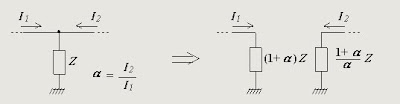

Often, the implementation of dominant-pole compensation results in the phenomenon of pole splitting: the lowest frequency pole of the uncompensated amplifier "moves" to an even lower frequency to become the dominant pole, and the higher-frequency pole of the uncompensated amplifier "moves" to a higher frequency.
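Pole splitting can be illustrated with the small-signal model commonly used for a two-stage amplifier with a Miller capacitor Cc connected across the second stage. The Python sketch below assumes that model's denominator, 1 + b1·s + b2·s², with the coefficients given in the docstring, and uses hypothetical component values. With Cc = 0, the poles sit at 1/(2πR1C1) and 1/(2πR2C2). Increasing Cc drives the lower pole down and the upper pole up. The function name is hypothetical.

```python
import cmath
import math

def two_stage_poles(r1, c1, r2, c2, gm2, cc):
    """Pole frequencies in Hz (low, high) of a two-stage amplifier with a
    Miller capacitor cc across the second stage.

    Denominator of the small-signal gain: 1 + b1*s + b2*s**2, where
      b1 = r1*(c1 + cc) + r2*(c2 + cc) + gm2*r1*r2*cc
      b2 = r1*r2*(c1*c2 + cc*(c1 + c2))
    """
    b1 = r1 * (c1 + cc) + r2 * (c2 + cc) + gm2 * r1 * r2 * cc
    b2 = r1 * r2 * (c1 * c2 + cc * (c1 + c2))
    # Roots of b2*s^2 + b1*s + 1 = 0, in a form that avoids cancellation error.
    q = -0.5 * (b1 + cmath.sqrt(b1 * b1 - 4 * b2))
    roots = (q / b2, 1 / q)
    return tuple(sorted(abs(s) / (2 * math.pi) for s in roots))

# Hypothetical component values for illustration.
R1, C1 = 100e3, 1e-12    # resistance and capacitance at the first-stage output node
R2, C2 = 100e3, 5e-12    # resistance and capacitance at the second-stage output node
GM2 = 1e-3               # second-stage transconductance, S

for cc in (0.0, 1e-12, 5e-12):
    low, high = two_stage_poles(R1, C1, R2, C2, GM2, cc)
    print(f"Cc = {cc * 1e12:3.0f} pF: poles at {low / 1e3:10.1f} kHz and {high / 1e6:6.2f} MHz")
```

With Cc = 5 pF, the poles move from about 318 kHz and 1.6 MHz to about 3.1 kHz and 23 MHz.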

Other methods

Some other compensation methods are lead compensation, lead–lag compensation and feed-forward compensation.

Lead compensation places a zero[3] in the open loop response to cancel one of the existing poles, whereas dominant pole compensation places or moves poles in the open loop response.

Lead–lag compensation places both a zero and a pole in the open loop response, with the pole usually being at an open loop gain of less than one.

Feed-forward compensation uses a capacitor to bypass a stage in the amplifier at high frequencies, thereby eliminating the pole that stage creates.

The purpose of these three methods is to allow greater open loop bandwidth while still maintaining amplifier closed loop stability. They are often used to compensate high gain, wide bandwidth amplifiers.
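As a rough illustration of the lead-compensation idea (a zero cancelling an existing pole), the Python sketch below models a hypothetical amplifier with poles at 1, 10 and 50 MHz and a low-frequency loop gain Aβ = 100. It then places a zero exactly on the 10 MHz pole. Exact cancellation is an idealization, since real component tolerances leave a nearby pole-zero pair. The function names and values are hypothetical.

```python
import math

def loop_gain(f, k, poles, zeros=()):
    """|T| and phase (deg) of T(jf) = k * prod(1 + jf/z) / prod(1 + jf/p)."""
    mag, phase = k, 0.0
    for p in poles:
        mag /= math.hypot(1.0, f / p)
        phase -= math.degrees(math.atan(f / p))
    for z in zeros:
        mag *= math.hypot(1.0, f / z)
        phase += math.degrees(math.atan(f / z))
    return mag, phase

def phase_margin(k, poles, zeros=()):
    """180 deg plus the phase at the unity-gain crossover.

    Uses log-scale bisection, so it assumes |T| crosses 1 only once,
    which holds for the examples below."""
    lo, hi = 1e-3, 1e15
    for _ in range(200):
        mid = math.sqrt(lo * hi)
        if loop_gain(mid, k, poles, zeros)[0] > 1.0:
            lo = mid
        else:
            hi = mid
    return 180.0 + loop_gain(hi, k, poles, zeros)[1]

K = 100.0                    # hypothetical low-frequency loop gain A0*beta
POLES = [1e6, 1e7, 5e7]      # hypothetical amplifier poles, Hz

print(f"no compensation: {phase_margin(K, POLES):6.1f} deg")
# Lead compensation, idealized: a zero placed exactly on the 10 MHz pole.
print(f"zero at 10 MHz:  {phase_margin(K, POLES, zeros=[1e7]):6.1f} deg")
```

Without the zero, the phase margin is slightly negative. With the 10 MHz pole cancelled, the loop reduces to two poles and the margin rises to roughly 40°.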

The dominant pole approximation

Reduction of a second order system to first order

Consider a second order system whose transfer function can be reduced to first order:

$$G(s) = \frac{1}{(s+a)(s+b)} \approx \frac{1}{a(s+b)}$$

This assumes that $a \gg b$, that is, that the pole at $s = -b$ is dominant. The coefficient $a$ remains in the denominator so that the DC gain (which is also the final value of the output for a unit step input) remains unchanged. Recall that the DC gain is $G(0)$.

The graph below shows the exact response (red) and the dominant pole approximation (green) for a = 8 and b = 1. Following the graph is Matlab code in which you can set a (with b = 1) to see how accurate the dominant pole approximation is.
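A minimal Python sketch of the same comparison (not the Matlab code referred to above) uses partial fractions. For G(s) = 1/((s + a)(s + b)), the unit-step response is 1/(ab) + e^(−at)/(a(a − b)) − e^(−bt)/(b(a − b)). The approximation 1/(a(s + b)) gives (1 − e^(−bt))/(ab). Both settle to 1/(ab). The sketch assumes this unnormalized form of the transfer function, and its function names are hypothetical.

```python
import math

def exact_step(t, a, b):
    """Unit-step response of G(s) = 1 / ((s + a)(s + b)), a != b (partial fractions)."""
    return 1 / (a * b) + math.exp(-a * t) / (a * (a - b)) - math.exp(-b * t) / (b * (a - b))

def approx_step(t, a, b):
    """Unit-step response of the dominant-pole approximation G(s) ~= 1 / (a (s + b))."""
    return (1 - math.exp(-b * t)) / (a * b)

def max_error_fraction(a, b=1.0, t_end=20.0, n=20000):
    """Largest |approx - exact| over 0..t_end, as a fraction of the final value 1/(ab)."""
    final = 1 / (a * b)
    worst = 0.0
    for k in range(n + 1):
        t = t_end * k / n
        worst = max(worst, abs(approx_step(t, a, b) - exact_step(t, a, b)))
    return worst / final

for a in (2.0, 4.0, 8.0, 20.0):
    print(f"a = {a:4}: max error {max_error_fraction(a):.1%} of final value")
```

For a = 8 and b = 1, the worst-case error is about 9% of the final value, and it shrinks as a grows relative to b.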

Example

Higher Order

The dominant pole approximation can also be applied to higher order systems. Here we consider a third order system with one real root and a pair of complex conjugate roots.

In this case the test for the dominant pole compares $a$ against $\zeta\omega_n$. This is because $\zeta\omega_n$ is the magnitude of the real part of the complex conjugate roots. Only the real parts of the roots are compared when determining dominance, because it is the real part that determines how fast the response decays. Note that the DC gains of the exact system and of the two approximate systems are equal.

In the examples and Matlab code below, the second order pole has $\zeta = 0.4$ and $\omega_n = 1$, which yields roots with a real part of −0.4 and an imaginary part of ±0.92. There are three graphs. In the first graph a = 0.1 (the real pole dominates), in the second graph a = 4 (the complex conjugate poles dominate), and in the third graph a = 0.4 (neither dominates, and the response is visibly more complicated than a simple second order response). In all three graphs the exact response is in red, the approximate response in which the first order pole dominates is in green, and the approximate response in which the second order poles dominate is in blue.
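The three cases can be reproduced with a short simulation. By analogy with the second-order case, the Python sketch below assumes three systems, all with DC gain 1/(aω_n²):

- the exact system G(s) = 1/((s + a)(s² + 2ζω_n s + ω_n²));
- the real-pole approximation 1/(ω_n²(s + a));
- the complex-pole approximation 1/(a(s² + 2ζω_n s + ω_n²)).

It integrates each one with a fixed-step fourth-order Runge–Kutta method rather than calling a control-systems library. It then reports each approximation's worst-case error as a fraction of the final value. The function names `step_response` and `compare` are hypothetical.

```python
def step_response(gain, den, t_end, dt=0.01):
    """Unit-step response of gain / den(s), sampled every dt, using RK4.

    den holds monic denominator coefficients [1, d1, ..., dn]. The state is
    z, z', ..., z^(n-1) for z^(n) + d1 z^(n-1) + ... + dn z = u, and y = gain * z.
    """
    n = len(den) - 1

    def deriv(x):
        dx = x[1:] + [0.0]
        dx[-1] = 1.0 - sum(den[j] * x[n - j] for j in range(1, n + 1))  # input u = 1
        return dx

    x = [0.0] * n
    ys = [0.0]
    for _ in range(int(round(t_end / dt))):
        s1 = deriv(x)
        s2 = deriv([xi + 0.5 * dt * d for xi, d in zip(x, s1)])
        s3 = deriv([xi + 0.5 * dt * d for xi, d in zip(x, s2)])
        s4 = deriv([xi + dt * d for xi, d in zip(x, s3)])
        x = [xi + dt / 6 * (a1 + 2 * a2 + 2 * a3 + a4)
             for xi, a1, a2, a3, a4 in zip(x, s1, s2, s3, s4)]
        ys.append(gain * x[0])
    return ys

def compare(a, zeta=0.4, wn=1.0, t_end=60.0, dt=0.01):
    """Worst-case error of each approximation, as a fraction of the shared final value."""
    exact = step_response(
        1.0, [1.0, a + 2 * zeta * wn, wn**2 + 2 * a * zeta * wn, a * wn**2], t_end, dt)
    real_pole = step_response(1 / wn**2, [1.0, a], t_end, dt)            # 1/(wn^2 (s + a))
    complex_pair = step_response(1 / a, [1.0, 2 * zeta * wn, wn**2], t_end, dt)
    final = 1 / (a * wn**2)                                               # common DC gain
    err_real = max(abs(r - e) for r, e in zip(real_pole, exact)) / final
    err_complex = max(abs(c - e) for c, e in zip(complex_pair, exact)) / final
    return err_real, err_complex

for a in (0.1, 4.0, 0.4):
    err_real, err_complex = compare(a)
    print(f"a = {a}: real-pole approx {err_real:.1%}, complex-pole approx {err_complex:.1%}")
```

The approximation with the smaller error should be the real-pole one for a = 0.1 and the complex-pole one for a = 4, matching the dominance test described above.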

Examples:
